Knowledge, Communities and AI

People are diverse and knowledge is messy

Jillian Bejtlich wrote a great explanation of why cleaning up and removing all of the mess in online communities is not ideal, especially in situations where community content is used to train large language models (LLMs). While the value of community ‘mess’ is more pronounced when viewed through the lens of AI training, the messiness of communities has always been core to their value - because people are messy. Not everyone understands or describes things in the same way.

Knowledge Is Complex

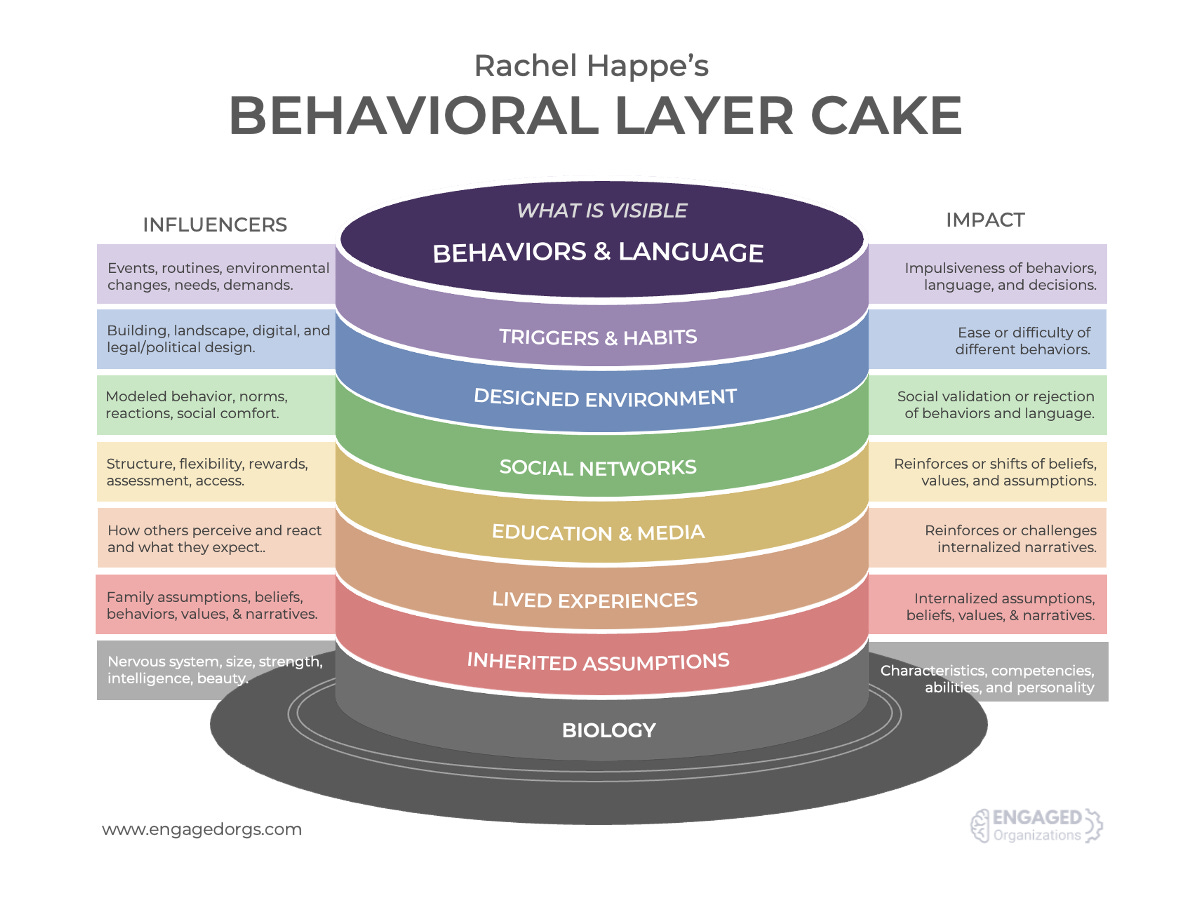

People come with persistent and temporal characteristics that make their needs at any given moment hard to anticipate. If you consider the Behavioral Layer Cake I created to describe the categories of influences on a person’s behavior, you can perhaps imagine how one individual might approach the same problem or opportunity differently on Monday than they do on Friday. On different days, a person might ask a question differently to different people and with a different emotional urgency. They might use different language. All of those things will impact the responses they get back, as well as their understanding of those answers.

I often joke that communities are massive multiplayer matching engines for people because they provide access to hundreds or even thousands of other people who also have their own persistent and temporal characteristics. At a given moment, there is likely to be one or more individuals who have the time, perspective, understanding, and ability to respond to a request with the right level of experience, empathy, detail, and perspective needed to meet the needs of someone with a question.

As a young parent faced with challenges that did not seem to resonate with the other parents I knew locally, I often turned to a small online parenting community that I trusted. The members of that community offered perspectives and experiences with the process of figuring out their own children that I could not look up in an online search. That access ultimately led to identifying - and addressing - some issues that are often ignored for years until children are in crisis. We avoided so much potential pain and struggle, but it was not one question, or one resource, or one solution; it was an ongoing conversation with people who had become familiar with my situation and context and who had the time, empathy, experience, and insight that matched my need.

We often think subject matter experts are the ideal people to answer questions in communities, but if the person who asks is just starting out, the people who can best understand and respond to their question are those who have more recently learned what they need to know. For me, having a neuropsychiatrist answer questions about my child when I had no idea what was going on would likely have been overwhelming. People who are just ahead in their knowledge still remember what it is like to not know or to lack the correct language and, critically, they remember what it felt like and can be more empathetic.

Knowledge Management is Complicated

The way we define and organize knowledge - dictionaries, encyclopedias, card catalogs, tags, file systems - is an attempt to bring some order and standardization to the chaos of language and learning. When I think about our approach to managing knowledge, I often think of a card catalog as a metaphor in which we try to put each nugget of content in the ‘correct’ place.

The perfectionism implied by this approach to knowledge management leads to some oddly ineffective approaches to maximizing the scope, value, or use of knowledge. Reams of documentation. Nested taxonomies with hundreds of categories. Systems that cull huge datasets to find the most common, rather than the most insightful, occurrences of information, something Jillian mentions in her post. In a recent conversation with Keven Heard, he seemed to suggest that what we call knowledge management often doesn’t even address knowledge, but instead manages information. We are taking technical thinking and trying to apply it in ways that increase relational value. Managing information is a complicated problem with fixed elements, while building relational value is a complex problem with adaptive elements. Applying the tools of one to the other is not particularly effective.

AI and Effective Knowledge Development

Setting aside the somewhat daft conversations about whether AI is sentient, AI can be used effectively to explore options related to almost anything. In that role, it can be a good thought partner by serving up different perspectives, interrogating possible consequences of using different approaches, and adapting content for those different options. It provides a quick and easy mechanism for generating alternatives, which has real value. Seeing those contrasts increases our understanding and confidence in our ultimate choices.

AI cannot, however, tell you how an email, argument, presentation, or blog post will land with an audience. It does not know your audience. It cannot adapt on the fly if you receive quizzical responses. It cannot mediate trust - or lack thereof - in relationships.

I have developed expertise in operating online community programs, and I spent over a decade presenting, writing about, researching, creating training, and building tools to help operate community programs. The more expertise I’ve amassed, the less interest I have in creating assets because it is not the most efficient way of successfully transferring what I know.

Two things are critical to knowledge adoption:

Asking Questions

Emotional Conviction

We expend huge amounts of money and time documenting knowledge, but until someone asks a question, they are not actually invested in the answer. Unless people want the answer, no amount of documentation matters. Questions, and to a lesser degree searches, are the observable artifacts of motivation to know the answer.

A client recently asked if I could provide documented options for community engagement routines to new community leaders. While I have those, I suggested that a better approach would be to spend a few minutes discussing the goals of each community, the capacity/interest of those facilitating them, and the types of engagement approaches that might resonate. The real issue is not the details of what they can do; it is convincing new community leaders that their job is not to be the font of wisdom or to be responsible for answers, and that posting lots of content will not lead to engagement. It is actually quite challenging for people to hear that they cannot DO more and that, to succeed, they can only do more once others have engaged. Until that time, doing more has the effect of dousing emergent sparks. Emotionally, it is challenging to wait - and rely - on other people. It doesn’t feel sufficient, and yet, without that first small step, a collaborative journey will never succeed.

Oddly, producing less but spending more time and energy discussing things is by far the more effective way to transfer knowledge. It is very often dismissed as inefficient because it lacks scale, but there is an internet full of rich documentation out there that gets a lot less collective attention than TikTok reels.
